This post is a cross-post from blog.bbv.ch.

This is the handout blog post for the presentation I am giving at the internal bbv Techday on 28 November. The presentation is aimed at beginners to the REST concept.

Let’s start!

Could you imagine life without having the sheer amount of information of the web at your fingertips?

I can’t!

When I analyze how I use the web during the day, I see that I read my RSS feeds daily to get up-to-date information about what's going on both in IT and in the real world. I buy train or bus tickets either directly in the browser or in an app on my iPhone, and much more. The web has dramatically changed the way we produce and share information.

So what makes the web such a successful application platform?

If we look behind the scenes, we see that the architecture of the web is nothing more than thousands of simple, small-scale interactions between agents and resources that use the web's founding technologies: HTTP and the URI.

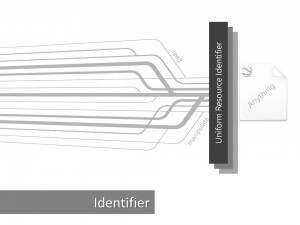

The key concept or fundamental building block is a resource. Almost anything can be modeled as a resource and made available for manipulation over the network.

In order to manipulate a resource, we first need to be able to identify it. The web provides resource identifiers in the form of URIs (Uniform Resource Identifiers), which make a resource uniquely addressable. The relationship between URIs and resources is many-to-one: a resource can have multiple URIs, which provide different information about the location of the resource (e.g. http://…, file://…, ftp://…).

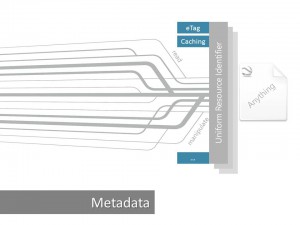

Furthermore, a resource can have metadata. Metadata provides additional information such as location information, alternate resource identifiers for different formats, or entity tag (ETag) information about the resource itself. One aspect that is crucial for letting the web scale out relies on this metadata: caching. Caching makes it possible to store copies of frequently accessed data in several places along the request-response path. If any cache along the request path has a fresh copy of the requested representation, it uses that copy to satisfy the request. When caching is used, the metadata should include headers describing the freshness of a resource, such as Expires and Cache-Control, as well as validators such as ETag and Last-Modified.

Stale resources must be revalidated with the origin server before they can be used to satisfy any further request. Revalidation takes place by issuing so-called conditional requests to the origin server: the cache sends the stored ETag in an If-None-Match header or the stored Last-Modified value in an If-Modified-Since header. (Their counterparts, If-Match and If-Unmodified-Since, are used for conditional updates, for example to avoid overwriting someone else's changes with a PUT.) If the resource representation (discussed shortly) has not changed since the last request, the server answers with 304 Not Modified and only header information is exchanged; the body is transferred only if the representation has changed.
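To make the revalidation flow concrete, here is a minimal Python sketch of an origin server answering conditional GET requests. It rests on a few assumptions that are not part of the original post: the function names are hypothetical, the ETag is derived from a hash of the representation bytes (one common choice among several), request headers arrive in a dict with canonical names, and `max-age=60` is an arbitrary example value. The freshness check is deliberately simplified and only looks at `max-age`.

```python
from __future__ import annotations

import hashlib


def make_etag(body: bytes) -> str:
    # Assumption: a strong validator derived from a hash of the representation bytes
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def _opaque(tag: str) -> str:
    # If-None-Match uses weak comparison, so a "W/" prefix is ignored when matching
    tag = tag.strip()
    return tag[2:] if tag.startswith("W/") else tag


def handle_conditional_get(
    body: bytes, request_headers: dict[str, str]
) -> tuple[int, dict[str, str], bytes]:
    """Answer a GET for `body`, honoring If-None-Match (header names assumed canonical)."""
    etag = make_etag(body)
    response_headers = {"ETag": etag, "Cache-Control": "max-age=60"}
    if_none_match = request_headers.get("If-None-Match")
    if if_none_match is not None:
        candidates = [_opaque(t) for t in if_none_match.split(",")]
        if "*" in candidates or etag in candidates:
            # Unchanged: 304 Not Modified, headers only, no body
            return 304, response_headers, b""
    # First request or changed representation: full 200 response with the body
    return 200, response_headers, body


def is_fresh(cache_control: str, age_seconds: int) -> bool:
    """A cached copy is fresh while its age is below max-age; other directives are ignored."""
    for directive in cache_control.split(","):
        name, _, value = directive.strip().partition("=")
        if name.lower() == "max-age" and value.isdigit():
            return age_seconds < int(value)
    return False  # no usable max-age: treat as stale and revalidate
```

A first request returns 200 with the body and an ETag; repeating the request with that value in If-None-Match yields 304 Not Modified and an empty body, which is what makes revalidation cheap.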

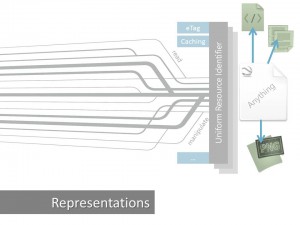

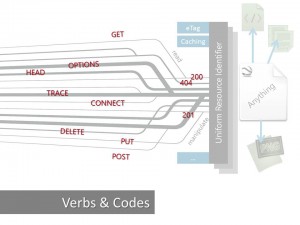

Besides having metadata, a resource can have many representations, such as XML, JSON, images and more. A representation captures the state of a resource at a point in time and can be any sequence of bytes. Which representation is returned is usually decided through content negotiation, for example based on the client's Accept header, and is therefore determined at runtime. But how do we interact with those resources? HTTP provides the verbs we need.
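Content negotiation can be sketched in a few lines. The following Python example is a simplified, hypothetical server-side helper: it reads the client's `Accept` header, orders the media ranges by their `q` weights and picks the first representation the server can produce. Unlike the full HTTP rules, it ignores the specificity of media ranges and any media-type parameters other than `q`.

```python
from __future__ import annotations


def parse_accept(accept: str) -> list[tuple[str, float]]:
    """Split an Accept header into (media_range, q) pairs, highest q first."""
    ranges = []
    for part in accept.split(","):
        media, *params = [p.strip() for p in part.split(";")]
        if not media:
            continue
        q = 1.0
        for param in params:
            name, _, value = param.partition("=")
            if name.strip().lower() == "q":
                try:
                    q = float(value)
                except ValueError:
                    q = 0.0  # malformed weight: treat the range as not acceptable
        ranges.append((media.lower(), q))
    # sorted() is stable, so ranges with equal q keep their header order
    return sorted(ranges, key=lambda r: r[1], reverse=True)


def matches(media_range: str, media_type: str) -> bool:
    """True if a range such as */*, text/* or application/json covers media_type."""
    if media_range == "*/*":
        return True
    r_type, _, r_sub = media_range.partition("/")
    t_type, _, t_sub = media_type.partition("/")
    return r_type == t_type and r_sub in ("*", t_sub)


def negotiate(accept: str, available: list[str]) -> str | None:
    """Pick the representation to send; None means the server should answer 406."""
    if not accept.strip():
        return available[0]  # no preference stated: use the server's default
    for media_range, q in parse_accept(accept):
        if q <= 0:
            continue
        for media_type in available:
            if matches(media_range, media_type):
                return media_type
    return None
```

For example, a client sending `Accept: application/xml;q=0.5, application/json` would receive the JSON representation, while a client asking only for `image/png` gets `None`, which a server would translate into 406 Not Acceptable.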

The available verbs are GET, POST, PUT, DELETE, OPTIONS, HEAD, TRACE, CONNECT and PATCH. In the next demo we look at what the different verbs mean and how they are expected to behave (especially GET, POST, PUT and DELETE). In addition to verbs, HTTP defines a set of response codes, such as 200 OK, 201 Created and 404 Not Found. Together, verbs and response codes form a general framework for interacting with resources.

https://github.com/danielmarbach/RestFundamentals

Let us explore those principles in the next demo!
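As a small, self-contained illustration of how verbs and response codes work together (not the code from the demo repository), the Python sketch below models a hypothetical `/orders` collection held in memory. The URIs, the class name and the choice to let PUT create a resource under a client-chosen ID are assumptions made for the example.

```python
from __future__ import annotations

import itertools


class OrderCollection:
    """Hypothetical in-memory /orders resource; handle() maps (verb, path) to (status, headers, body)."""

    def __init__(self) -> None:
        self._orders: dict[str, dict] = {}
        self._ids = itertools.count(1)

    def handle(self, method: str, path: str, body: dict | None = None):
        if path == "/orders":
            if method == "GET":
                return 200, {}, dict(self._orders)
            if method == "POST":
                order_id = str(next(self._ids))
                self._orders[order_id] = body or {}
                # 201 Created plus a Location header pointing at the new resource
                return 201, {"Location": f"/orders/{order_id}"}, body
            return 405, {"Allow": "GET, POST"}, None
        if path.startswith("/orders/"):
            order_id = path[len("/orders/"):]
            if method == "GET":
                if order_id in self._orders:
                    return 200, {}, self._orders[order_id]
                return 404, {}, None
            if method == "PUT":
                # PUT replaces the whole representation; repeating it leaves the same state
                created = order_id not in self._orders
                self._orders[order_id] = body or {}
                return (201 if created else 200), {}, body
            if method == "DELETE":
                # A second DELETE changes nothing further and simply reports 404
                if self._orders.pop(order_id, None) is None:
                    return 404, {}, None
                return 204, {}, None
            return 405, {"Allow": "GET, PUT, DELETE"}, None
        return 404, {}, None
```

POST to the collection creates a new resource and answers 201 Created with a Location header; PUT is idempotent, so sending it twice leaves the same state; DELETE answers 204 No Content and then 404 once the resource is gone; a verb the resource does not support yields 405 Method Not Allowed together with an Allow header.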

We see that the web is a good example of a distributed architecture that is inherently scalable thanks to addressable resources, representations of resources, a uniform means of communication and more. The web as it stands today provides a simple interface to complex applications running behind the scenes, and it provides loose coupling by hiding concrete things like databases and entities behind one single concept:

A resource! And we haven't even talked about REST yet, only about the architecture of the web itself!

REST, short for Representational State Transfer, was first described in Roy Fielding's dissertation, in which he analyzed the success of the web itself and derived a set of constraints that made the web a successful application platform. The idea is that if you apply those constraints when designing your own systems, you benefit from everything the web offers and can potentially achieve what the web achieved. Simply put, REST is nothing more than a set of constraints, which is why REST is called an architectural style.

If your system follows all the constraints defined by the REST architectural style, it is considered RESTful.

But before we dive further into REST we need to look at another aspect which makes the web a highly scalable and distributed architecture.

It is hypermedia, or more precisely hypermedia as the engine of application state (abbreviated with the awful acronym HATEOAS)! The idea is simple and yet very powerful. A distributed application makes forward progress by transitioning from one state to another, just like a state machine. The difference from traditional state machines, however, is that the possible states and the transitions between them are not known in advance. Instead, as the application reaches a new state, the next possible transitions are discovered, typically as links in the representation the server returns. When hypermedia and the REST constraints are applied well, you often hear the term "web friendliness". But what makes something web-friendly?

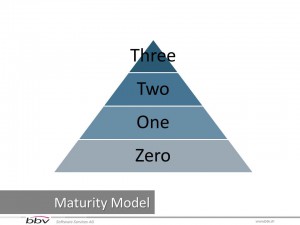

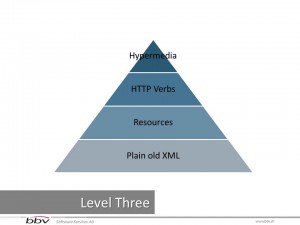
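The state machine idea behind hypermedia can be sketched in Python. The order workflow, the link relation names (`payment`, `cancel`, `shipment`) and the URI layout are all hypothetical; the point is that the server only advertises the transitions that are valid in the current state, and the client discovers them by relation name instead of hard-coding URIs.

```python
from __future__ import annotations

# Hypothetical order workflow: state -> {link relation: (HTTP verb, URI suffix, next state)}
TRANSITIONS: dict[str, dict[str, tuple[str, str, str]]] = {
    "placed": {"payment": ("PUT", "/payment", "paid"), "cancel": ("DELETE", "", "cancelled")},
    "paid": {"shipment": ("POST", "/shipment", "shipped")},
    "shipped": {},
    "cancelled": {},
}


def represent(order_id: str, state: str) -> dict:
    """Server side: advertise only the transitions that are valid in the current state."""
    base = f"/orders/{order_id}"
    links = [{"rel": "self", "href": base, "method": "GET"}]
    for rel, (method, suffix, _) in TRANSITIONS[state].items():
        links.append({"rel": rel, "href": base + suffix, "method": method})
    return {"id": order_id, "state": state, "links": links}


def follow(representation: dict, rel: str) -> dict:
    """Client side: look a transition up by relation name instead of building URIs itself."""
    for link in representation["links"]:
        if link["rel"] == rel:
            return link
    raise LookupError(f"{rel!r} is not offered in state {representation['state']!r}")


def transition(representation: dict, rel: str) -> dict:
    """Simulate the server executing the followed link and returning the next representation."""
    follow(representation, rel)  # the client may only use links the server offered
    _, _, next_state = TRANSITIONS[representation["state"]][rel]
    return represent(representation["id"], next_state)
```

After `payment` the `cancel` link disappears from the representation, so a client that only follows links cannot attempt a transition the server no longer allows.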

Leonard Richardson proposed a classification model for the maturity of applications and services on the web. The classification is based on the use of URIs, HTTP and hypermedia.

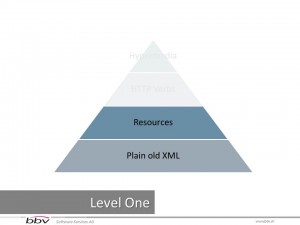

Level Zero describes services that have a single URI and use only HTTP POST to invoke behavior on the server. This is often referred to as Plain Old XML (POX) or XML-RPC and is also widely seen in SOAP-based WS-* protocols.

Level One describes rudimentary services that expose many URIs for numerous logical resources but use only a single HTTP verb. The difference from Level Zero is that Level Zero services tunnel all interactions through a single resource, whereas Level One services insert the operation names and parameters into a URI and transmit that URI to a remote service with the HTTP GET verb. Level One tackles complexity by divide and conquer, breaking a large service endpoint down into multiple resources.
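To contrast the two lowest levels, the Python sketch below builds the same hypothetical "cancel order 42" interaction once in Level Zero style and once in Level One style, returning (verb, URI, body) tuples. The endpoint `/service`, the XML element names and the `operation` query parameter are invented for illustration.

```python
from __future__ import annotations

from urllib.parse import urlencode
from xml.etree import ElementTree as ET


def level_zero_request(operation: str, **params: str) -> tuple[str, str, str]:
    """Level Zero: one endpoint, always POST; the operation travels inside the XML payload."""
    envelope = ET.Element("request")
    ET.SubElement(envelope, "operation").text = operation
    for name, value in params.items():
        ET.SubElement(envelope, name).text = value
    return "POST", "/service", ET.tostring(envelope, encoding="unicode")


def level_one_request(resource: str, operation: str, **params: str) -> tuple[str, str, str]:
    """Level One: many resource URIs but a single verb; operation and parameters go into the URI."""
    query = urlencode({"operation": operation, **params})
    return "GET", f"/{resource}?{query}", ""
```

Note that the Level One variant uses GET for an operation with side effects, even though HTTP defines GET as a safe method; using the verbs according to their semantics is what Level Two adds.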

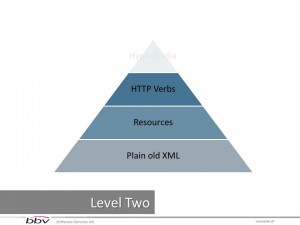

Level Two describes services that host numerous URI-addressable resources and use the HTTP verbs for the basic CRUD operations: GET, POST, PUT and DELETE. It introduces a standard set of verbs so that similar situations are handled in the same way, removing unnecessary variation.

Level Three is the most web-aware level and the only one that truly follows the hypermedia approach. It is also the only level considered RESTful, because it introduces discoverability, which makes the protocol more self-documenting.

https://github.com/danielmarbach/RestFundamentals

Enough babbling! Let's dive into an example.

This should give you a clear understanding of the fundamentals of REST. It’s simply the principles of the scalable web applied to your software architecture!

Not convinced yet? Do you want to dive deeper into the constraints of REST? Do you want to learn how to define resources in your applications, how to define transitions between those resources, how to version them and much more? No problem! Vote for my next presentation about REST, either at the bbv Forum or at the next Techday!

Sources

- REST with ASP.NET MVC – Hadi Hariri http://vimeo.com/43808786

- Hypermedia APIs – Jon Moore http://vimeo.com/20781278

- Architecture of the World Wide Web, Volume One – W3C http://www.w3.org/TR/webarch

- REST in Practice: Hypermedia and Systems Architecture – O'Reilly

- RESTful Web Services – O'Reilly

- Richardson Maturity Model – Martin Fowler http://martinfowler.com/articles/richardsonMaturityModel.html

- REST APIs must be hypertext-driven – Roy Fielding http://roy.gbiv.com/untangled/2008/rest-apis-must-be-hypertext-driven#comment-718

- Versioning of REST APIs http://www.lexicalscope.com/blog/2012/03/12/how-are-rest-apis-versioned/

- Architectural Styles and the Design of Network-based Software Architectures – Roy Fielding http://www.ics.uci.edu/~fielding/pubs/dissertation/top.htm

- Designing Hypermedia APIs http://www.designinghypermediaapis.com

- PUT vs. POST http://jcalcote.wordpress.com/2008/10/16/put-or-post-the-rest-of-the-story/ and http://stackoverflow.com/questions/630453/put-vs-post-in-rest

- Images legally licensed from istockphoto.com or sxc.hu
