This is the presentation I gave at the conference Basta! in September 2012.

Before we can talk about Agile quality assurance, we have to take a step back and look …

… at the goals of Agile software development. Our Agile quality assurance strategy should support these goals:

First, we want to be able to adapt to changes quickly. Changes are everywhere: the business environment, the technologies and the people involved can all change.

Second, we want to minimize time-to-market. The sooner we can bring the product to market and make money, the better.

Now, what impact do these two goals have on quality assurance?

We start with a look at why traditional quality assurance fails to achieve the above two goals.

The main part of the presentation is about where bugs sneak into our products and how to prevent them.

We finish with the opportunities Agile quality assurance provides us with.

What’s wrong with traditional quality assurance?

What’s wrong with focusing primarily on testing?

What’s wrong with thoroughly testing a product before putting it out to the users?

Testing a product takes a lot of time. Traditionally, testing takes place after development has finished. But a three-month testing phase, for example, is simply much too long for a reasonable time-to-market. The feedback cycle back to development is so long that the developers probably no longer know exactly what they built and now have to fix. As a result, improving quality again takes a lot of time.

Additionally, in most cases it is much cheaper and faster to prevent bugs from ever being introduced than to hunt them down and fix them – and probably introduce some new bugs while doing so.

Ask yourself: what did the last bug you found on a production system cost you? How many hours could have been spent on activities preventing this bug from happening?

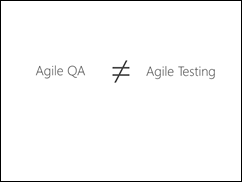

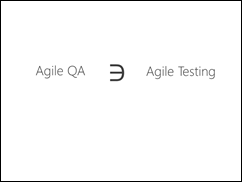

But, let’s talk about Agile quality assurance now. A common misunderstanding is that Agile quality assurance is the same as Agile testing. But that is not true.

Agile testing is a small part of Agile quality assurance. We’ll see the other parts in the rest of this presentation.

In order to have a successful quality assurance strategy, we need to know where things can go wrong and result in a product of low quality.

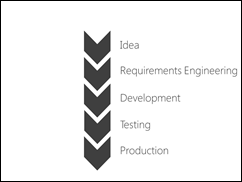

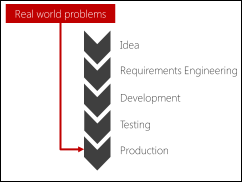

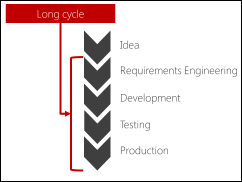

Every piece of software is created by passing through the phases of

- having the idea to build it,

- gathering the requirements about what actually to build,

- developing it,

- making sure it runs as expected by testing it, and

- putting it into production so that it can be used.

This is true for every kind of methodology. The methodologies just vary in the size of the piece of software built at once (one-piece flow in Kanban, product increment in Scrum, the whole product in “waterfall”).

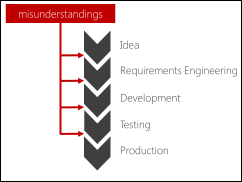

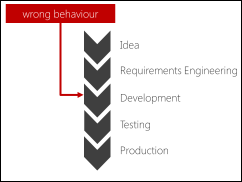

But a lot of things can go wrong here:

There can be misunderstandings in the space between the steps.

When information is passed in the form of documents, people often interpret them differently. You can try as hard as you want, but it is nearly impossible to describe software at the level of detail needed in a way that allows only one interpretation. People start to assume things and combine what is written with their own background knowledge.

This effect is amplified further when the phases are executed by different people. When they pass information – or documents – from one to another, a lot of knowledge is lost. The worst possible scenario is when the person from an earlier phase is no longer available to answer the questions of the people working in a later phase.

All the resulting misunderstandings lead to a product that does not do what it should, or does it poorly. And of course, a lot of time is wasted getting things fixed.

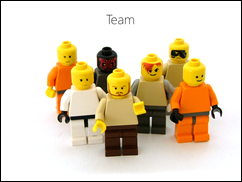

To prevent this from happening, everyone involved has to work as a team from beginning to end. All information is contained within the team and therefore cannot get lost. If, for example, a tester has a question about a requirement, the requirements engineer (or Product Owner) is available in the team and can answer it. Furthermore, the developer can join the discussion and explain how it is implemented, thus providing further insight into the problem.

The team approach reduces misunderstandings and the resulting delays to a minimum.

In my team, all team members are present during planning meetings and discussions. This sounds expensive at first, but the misunderstandings and the need for explaining stuff multiple times would cost us much more.

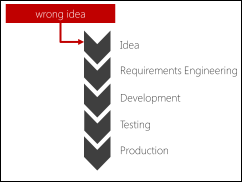

Having the wrong idea is the worst thing that can happen. Everything that follows after going for the wrong idea is just useless.

Who – apart from software developers building a continuous integration alarm system – would buy a lava lamp nowadays? There is simply no market for this idea.

It is part of quality assurance to make sure that we build something that is useful to somebody.

The best approach to this problem is to fail fast in case you do the wrong thing. Feedback about your idea should be as fast as feedback in the game Jenga, in which you have to take a brick from the lower part of the tower and put it on top – without making the tower fall.

Therefore, put your product in the hands of the user as soon as possible by focusing on a minimal set of features.

In my project we normally deploy our latest and greatest product as soon as possible to a selected group of customers. They provide us with great feedback about how to make the product better or sometimes what feature really isn’t worth their money. With this feedback we can improve our product before releasing it to a broader group of customers.

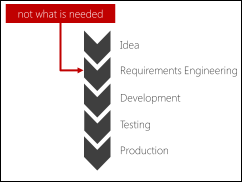

A lot of bugs happen in requirements engineering. What is specified is not what is needed:

The requirements engineer tries to put into requirements what a stakeholder says she wants. However, if there is no quick feedback from the stakeholders, things often go wrong because of the different mental models and knowledge backgrounds.

The only way to keep requirements bugs under control is to frequently show all the stakeholders what is being built and to check whether this is what they wanted. In Scrum, the main purpose of the Sprint Review meeting is to align stakeholder expectations with the team’s understanding of what is to be built.

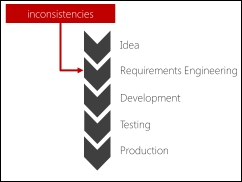

Another problem with requirements is that they often are not consistent.

Requirements documents tend to get thick.

When the requirements document reaches a certain size, it is no longer possible for a human brain to check it for consistency.

Big requirements documents contain inconsistencies that cannot be spotted easily. They just pop up during development – or while cooking with a non-matching set of pan and lid.

These inconsistencies result in problems during development. Either one side of the inconsistency is not fulfilled, or consolidating the requirements takes time. Time during which our product is not in the hands of the users.

The solution to this problem is rather simple. Your requirements document – or Product Backlog in Scrum – should never be bigger than what a single person can survey and check for consistency. In the picture, you can see the Product Backlog of one of my former projects. The box has very limited space for cards. On each card there is a User Story or an Epic – a placeholder for something bigger than a User Story. Agile requirements engineering focuses its effort on the requirements that will be implemented in the near future, while keeping the requirements that will be implemented later at a very coarse level. With time, the coarse requirements are broken down into User Stories, resulting in a backlog of always roughly the same size. A size that can easily be checked for consistency.

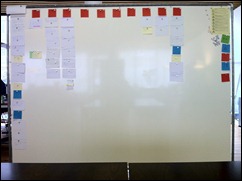

This is another example, from my current project. The columns (red cards) represent features of the software and the white cards are User Stories or Epics.

The longer a product lives, the harder it gets to know what the software is capable of. Therefore, it gets more and more difficult to specify new features in alignment with existing functionality.

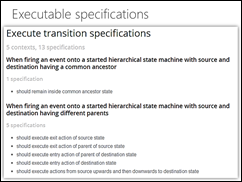

That is the reason why we “translate” our requirements – User Stories with acceptance criteria – into executable specifications. The sum of all these specifications describes almost all functionality in our system. Whenever we introduce something that breaks existing functionality, one of the specification tests fails and alerts us to an inconsistency in the requirements. Therefore, we never ship software that breaks old behavior, unless it is intended of course.
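To make the idea of an executable specification more concrete, here is a minimal sketch in Python. The `Cart` class and the discount rule are purely hypothetical and are not taken from the project described here; they only illustrate how an acceptance criterion ("orders of 100.00 or more get 10% off") can be written as a check that runs on every build.

```python
from dataclasses import dataclass, field


@dataclass
class Cart:
    """Hypothetical domain object, invented for this illustration."""
    items: list = field(default_factory=list)

    def add(self, name: str, price_cents: int) -> None:
        self.items.append((name, price_cents))

    def total_cents(self) -> int:
        subtotal = sum(price for _, price in self.items)
        # Assumed acceptance criterion: orders of 100.00 or more get 10% off.
        if subtotal >= 10_000:
            return subtotal * 90 // 100
        return subtotal


def spec_orders_of_100_or_more_get_ten_percent_off() -> None:
    # Given a cart worth exactly 100.00
    cart = Cart()
    cart.add("keyboard", 6_000)
    cart.add("mouse", 4_000)
    # When the total is calculated, then 10% is deducted
    assert cart.total_cents() == 9_000


def spec_smaller_orders_pay_full_price() -> None:
    # Given a cart below the threshold, the full price is charged
    cart = Cart()
    cart.add("mouse", 4_000)
    assert cart.total_cents() == 4_000


if __name__ == "__main__":
    spec_orders_of_100_or_more_get_ten_percent_off()
    spec_smaller_orders_pay_full_price()
    print("all specifications pass")
```

If a later User Story changed the discount rule without anyone noticing the conflict, the first specification would fail and point directly at the inconsistency.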

When thinking about bugs, development is probably the first phase that comes to your mind.

Writing code is difficult and as long as humans write code, mistakes will happen. With the specifications I mentioned earlier, the software will behave more or less as expected. But bugs hide in the details. Details that cannot be covered with specifications. Otherwise, the specifications would get as complicated as the code itself and therefore bugs would hide there, too.

At home, I have a small garden. Believe me when I say that dark places attract bugs. A lot of bugs, actually.

The dark places in our software are called technical debt: things that should be designed or implemented in a simpler or more understandable way. The more technical debt there is in a system, the easier it gets for bugs to hide in there.

Furthermore, both technical debt and bugs increase pressure on the available time of a team because they both take away the time needed to do things properly. This results in even more technical debt and bugs. This spiral has to be broken early or it can take a project down completely.

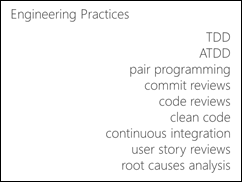

The only way to prevent this spiral effect is to follow good engineering practices like the ones listed here.

TDD – Test Driven Development – helps to prevent a lot of bugs from ever occurring and helps to get a design that stays maintainable.

ATDD – Acceptance Test Driven Development – helps communication between business representatives and developers and gives you an executable specification, which again helps you maintain your software.

With pair programming, your code is not only reviewed in real time, there is also knowledge transferred between the developers. The better understanding of technology and domain helps again to prevent bugs from ever being introduced.

A commit review happens whenever one of my teammates wants to commit code into the shared source code repository. The developer calls another team member to perform the review, and the two go through all the changes once again. This results in discussions about design and edge cases. Again, better quality results. Another effect is that there is no single line of code that is understood by only a single person in the team.

Code reviews are the classical way to check code. We use them only for very complicated parts of our code because they are very time-consuming and because, with pair programming and commit reviews, code has already been reviewed by at least one other person.

The goal of Clean Code is that the code is as simple and understandable as possible. Simpler code is less buggy. If it is easy to understand, bugs can more easily be spotted.

Continuous integration provides us with frequent feedback about whether all our tests pass and therefore, whether we introduced bugs in our software.

A User Story review happens whenever all tasks to fulfill a User Story are done. All involved developers stand together and go through the tasks once more. During the discussion, wrong assumptions or misunderstandings can often be spotted.

Finally, root cause analysis is used when something goes wrong. It is important to look for causes and, if possible, to eliminate them. Otherwise, the same mistakes are made over and over again.

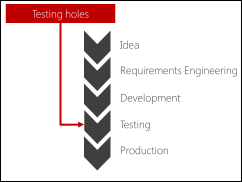

Of course, no bugs are introduced during testing. But traditional testing carries a high risk of letting bugs through to production. Why?

Typically, testers create their test cases from the requirements. The result is more or less a checklist. The problem is that the requirements never cover 100% of what the developers created to get a running system. Therefore, there will be small or sometimes even big parts of functionality that are not tested.

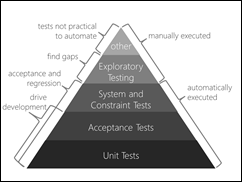

An Agile testing strategy contains very different kinds of tests.

Unit tests are written by the developers using Test Driven Development. There is almost no code written that is not covered by at least one unit test. Therefore, we can assume with a very high probability that the individual parts work correctly.

Acceptance tests written by the developers using Acceptance Test Driven Development augment the unit tests by providing feedback about whether the software actually does what the business expects.

These two test-driven kinds of tests are the driver of development.

Testers build upon them by introducing tests for non-functional requirements – constraints – on the system level. Because the acceptance tests already provide enough information about whether the functionality provided by the software is working, these tests do not focus on functionality at all, but on whether the system as a whole performs as expected. This reduces the number of needed tests dramatically.

The above three kinds of tests are fully automated so that they can be run on a continuous integration server all the time, on every commit. Therefore, they can be used as a regression test suite.
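As a rough illustration of such an automated constraint test, the following Python sketch checks a performance budget instead of functional behavior. The function under test, the data size and the two-second budget are all assumptions chosen for the example; a real constraint would come from the project's own non-functional requirements.

```python
import time


def find_duplicates(values):
    """Hypothetical function under test: returns the values seen more than once."""
    seen, duplicates = set(), set()
    for value in values:
        if value in seen:
            duplicates.add(value)
        seen.add(value)
    return duplicates


def measure_seconds(func, *args):
    """Return the wall-clock time a single call to func takes."""
    start = time.perf_counter()
    func(*args)
    return time.perf_counter() - start


def constraint_handles_100k_items_within_budget(budget_seconds=2.0):
    # Assumed constraint: 100,000 items must be processed within the budget.
    data = list(range(100_000)) + [0, 1, 2]
    elapsed = measure_seconds(find_duplicates, data)
    assert elapsed < budget_seconds, f"took {elapsed:.3f}s, budget {budget_seconds}s"
    return elapsed


if __name__ == "__main__":
    print(f"within budget: {constraint_handles_100k_items_within_budget():.3f}s")
```

Timing checks like this depend on the machine they run on, so the budget is deliberately generous; the point is to catch order-of-magnitude regressions on the continuous integration server, not to benchmark.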

Not every possible test can be or should be automated. People are very good at spotting visual, usability and consistency problems. Therefore, exploratory testing is a very important part. However, the tester can focus on special cases because the above tests already prove that the system is largely working as expected. The main purpose is to find gaps in the test coverage provided by the above three kinds of tests.

Finally, there are other manual tests like user acceptance tests that complete the Agile testing strategy.

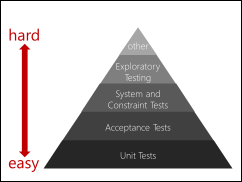

When we want to be really Agile and want our software to be capable of adapting to changes easily, it is very important that our test harness can change easily, too.

Unit tests are very easy to change because they cover very little functionality.

Acceptance tests are a bit harder, but each test covers only a single piece of functionality. Therefore, for a single requirements change, only a few acceptance tests should be affected.

The higher up you get in the pyramid, the harder it gets to change the tests. Therefore, there have to be fewer tests at the top than at the bottom. Otherwise, you spend your time updating your tests instead of delivering new functionality. It is impossible to update a 100-page test script every Sprint. Coded, automated tests should take care of this.

Only deployed software provides value. But a lot of things can go wrong there, too.

Like the weather, it is not fully predictable how a software system will behave once it is deployed. Things that ran smoothly during development and testing suddenly stop working, the hardware seems to go wild and users start to complain.

The only solution to this problem is to deploy the software frequently – at least to a testing environment.

With Continuous Deployment, the test system is updated continuously, on every commit to the source code repository.

Of course, this is only of real value when your test environment matches your production environment very closely. An added benefit is that you can give all your stakeholders access to the testing environment.

In my project, my customer installs the product increment of every Sprint on the machines of a selected group of power users. Believe me, that motivates my team quite a lot to deliver quality. Or are your developers fond of taking calls of customers reporting bugs?

The last source of problems is a long duration from requirements engineering to production.

If this duration is long, the environment of the product may change and it is not possible to react quickly without sacrificing quality by dropping one of the above-mentioned practices.

Another problem with a long cycle from requirements to production is time pressure. Developers and testers tend to take shortcuts when they are confronted with time pressure.

With a short cycle time, less can go wrong at once because there is less work in progress than with a long cycle time.

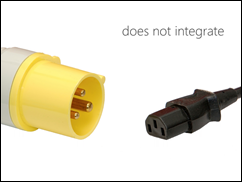

A short cycle time also minimizes integration problems. Integrating small things is easy. Integrating work done over a long period of time quickly gets frustrating and is an invitation for bugs to join the party.

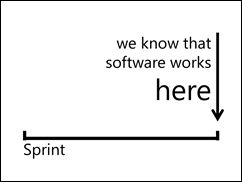

The solution is to shorten the time it takes from requirements engineering to production to a small time frame. In Scrum this is the Sprint. At the end of the Sprint, everything has to work and be deployable. If your environment changes, or time pressure comes in, you know that what you built up till now works.

In order to really know that your software works, Continuous Integration and Continuous Deployment have to be in place.

Continuous Integration tells you whether all your tests pass.

Continuous Deployment makes sure that your software can be installed.
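The interplay of the two can be sketched as a tiny pipeline in which deployment only runs when the tests have passed. This is a simplified illustration, not the tooling used in the project described here: `run_pipeline`, `run_all_tests` and `deploy_to_test_environment` are hypothetical names, and the two stage functions are placeholders for whatever test runner and installer a real build server would call.

```python
from typing import Callable, List, Tuple

# A stage is a name plus a function that returns True on success.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run the stages in order and stop at the first failure."""
    log = []
    for name, action in stages:
        succeeded = action()
        log.append(f"{name}: {'ok' if succeeded else 'FAILED'}")
        if not succeeded:
            break  # never deploy a build whose tests failed
    return log


def run_all_tests() -> bool:
    # Placeholder: a real pipeline would invoke the project's test runner here.
    return True


def deploy_to_test_environment() -> bool:
    # Placeholder: a real pipeline would install the build on the test system.
    return True


if __name__ == "__main__":
    for line in run_pipeline([
        ("continuous integration", run_all_tests),
        ("continuous deployment", deploy_to_test_environment),
    ]):
        print(line)
```

Running this on every commit gives the two answers the text asks for: do all tests pass, and can the software still be installed.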

Let’s sum up what we’ve heard.

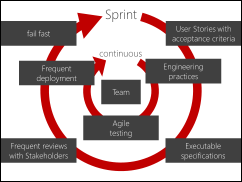

At the center of Agile Quality Assurance is your team. The team continuously applies good Engineering Practices, has an Agile Testing strategy and deploys the software frequently.

Requirements are passed to the team as User Stories with Acceptance Criteria that are transformed into Executable Specifications. The stakeholders are frequently involved to provide feedback, and if we are completely on the wrong track we fail fast and start over. In Scrum, all of this happens inside every Sprint.

To finish this presentation, let me show you the consequences of following this strategy for Agile Quality Assurance:

You need a team with great skills.

TDD, ATDD, exploratory testing, CI, CD and Agile Requirements Engineering are all hard to master. Therefore, you have to invest in your team and provide them with the opportunity to learn. The sooner they learn, the better. See “learn as a team” for more information.

One of the great benefits of our Agile quality assurance strategy is that our software can adapt to changes in the requirements due to changing business needs very easily.

There are no developers fearing to change the code. The tests provide them with a safety net. Even architectural changes are possible because the Acceptance Tests are decoupled from the actual architecture (see “ATDD” for more information).

Because we know that our software works and because we can check it any time we want with very little effort, we really can evolve our software feature by feature. Thus we achieve the first goal of Agile software development I stated at the beginning: adapt to change.

This ultimately results in much higher speed of development. Today, we can not only produce software with higher quality, but we can deliver it much faster, with smaller time-to-market – our second goal of Agile software development.

Thanks for reading!

I’d be very happy to read your thoughts in the comments section.

I thought this article was very good reading, and an accurate assessment of the case for Agile/Scrum/TDD/etc. I think my only question is: where do performance, stress, negative, fuzz tests (etc.) come in?

@Jeff

I summarised these kinds of tests as Constraint Tests because they check against needed constraints in performance and other non-functional properties of a system.

Hi Urs,

I don’t think it’s proven that time-to-market is reduced when using Agile. In fact, many project managers argue that projects managed with Agile never get finished. Even a project done successfully using Agile is managed in a silo that is part of a bigger waterfall project.

One thing I would like to mention, you might want to put the text next to the images, the layout of the page does not look very good.

By the way, please take a look at this post, the limitations of Agile: http://www.pmhut.com/limitations-of-agile-software-development

@PM Hut

I can only talk about my experiences and the feedback I get from projects I’m involved in. And for us, switching from some waterfall-ish process to an Agile process (Scrum in my case) brought a dramatic reduction in time-to-market.

The main reason was that the phases of requirements engineering, development and testing were switched from being sequential to being overlapping. So even if the total number of hours worked remains the same, the duration is shorter.

And of course, we got feedback earlier, which reduced working on the wrong thing. Again reducing the duration until a release.

But I agree with you that just following an Agile process does not automatically result in a shorter time-to-market. This requires a change in mindset that goes beyond a limited view on development alone. Only if the complete process from idea to deployment is optimized can a shorter time-to-market be achieved.

And yes, Agile does not work everywhere. But for us it works great.

However, I really can’t see why project managers would say that with Agile, projects don’t get finished. Agile means get-things-done-as-fast-as-possible. I guess that there was a lack of proper release planning, prioritization and focus on a minimal marketable product.

And thanks for the link and for reading our blog.

Software Reliability Engineering (SRE) is the traditional method of assessing software reliability. In practice, this method has turned out to be fairly reliable, but it assumes an overall methodology of unit test, then system test, and within system test there is a sequence of testing where new features are tested first, then bug fixes, then one or more rounds of regression. The idea of the system test plan is to replicate the conditions that would exist in the ops profile of the target deployment environment. Agile and DevOps don’t lend themselves easily to the SRE method because it just takes too long, but there is currently no reasonable replacement for SRE in the Agile context that produces the same high-quality results.