cross-post from http://blog.bbv.ch/2013/06/10/legacy-code-and-now-what/

Every day is Groundhog Day. It is eight o’clock in the morning. You come into the office, look at the Scrum board of your current project and pick the next task of the user story with the highest priority. You sit down, fully motivated, in front of your computer, open your favorite IDE and start to implement the task. But wait! Something is wrong! First, you have to fully grasp the code you intend to put the feature into. There is a lot of code in that area that needs to be understood, analyzed and put into context with the feature you are implementing. You start drawing a sequence diagram of what is going on in that code, but the deeper you dive into it, the more confusing it gets. Motivation decreases and frustration sets in. Maybe someone on your team can help you understand the code. You call your colleague, who immediately sits down with you and tries to help. Minutes later your friend has no clue either and tells you to “just freaking hack it into the code”. Despite feeling a bit dirty, you have no other choice than to hack it. The feature should have been finished yesterday rather than tomorrow! But you are a hero, aren’t you?! You can do it! The motivation comes back, you finally get the task done, and the feature you are working on is ready to be demonstrated during the sprint review.

The sprint review starts. Your product owner and your team members are gathered around the demo computer. You are the first to show your new feature to the product owner. The feature works and fully impresses your product owner. Now it’s your teammate’s turn to present the feature he has been working on. He starts presenting, but suddenly… BOOOM… the application crashes. Your colleague almost freaks out because he wanted to receive a pat on the back from the product owner, too. He blames you for killing his feature. The product owner gets angry and starts complaining that your team produces more mistakes than new features. Your teammate finally says what your team has always had in mind: he demands a rewrite of the whole software because you have reached a state where you cannot add any new features without severely damaging old ones. But guess what! Your product owner demands more features nonetheless. And he wants them now!

Do you recognize the situation? Let us analyze what happened here.

Implementing new features takes forever, and the new features break existing code, because the large, historically grown code base is neither well structured nor clean and expressive. What’s worse, planning becomes impossible because we cannot give reliable estimates of how long it will take to implement a feature. Without the ability to plan, we can no longer decide whether a feature is worth implementing, and the project risk becomes uncontrollable.

Little by little, the team gets frustrated with working on this legacy code. Demands to rewrite the whole mess grow stronger and stronger.

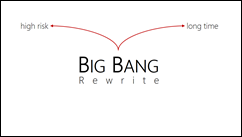

However, striving for the big bang rewrite is almost never a practical solution to the legacy code problem. A big bang rewrite carries a very high risk. How do you know that the code works the same afterwards and that it doesn’t become just another mess? Furthermore, a complete rewrite takes a long time, during which no new functionality can be shipped to customers. The result is a high risk of losing customers.

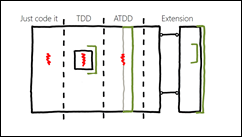

Let’s see how we can add new features to the software without having to rewrite it from scratch. We show you four approaches from which you can choose depending on the problem you need to solve. Normally, a combination of these approaches is used.

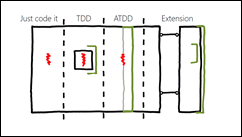

The approaches are Just code it, TDD (Test Driven Development), ATDD (Acceptance Test Driven Development) and Extension. First, we introduce the approaches, and then we show you when to choose which one.

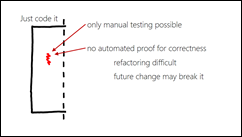

The first approach, Just code it, is nothing new. It is what you have been doing in your legacy projects all along. The drawback of this approach is that you have to rely entirely on manual testing to verify that the feature really works. No automated proof of correctness is introduced. This makes refactoring such newly introduced features rather difficult, and there is a good chance that future changes will break the code.

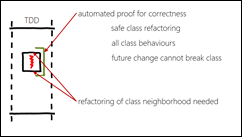

The second approach uses TDD (Test Driven Development) to drive the implementation of the new feature. The new feature is developed entirely with TDD, which gives you an automated proof of correctness for all class behaviors and allows you to refactor the new feature safely. To integrate the feature into its surrounding code, refactoring of the neighboring classes might be necessary. Therefore, there is still a risk of breaking existing features when introducing a new one.
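To make this concrete, here is a minimal sketch of what a test-first feature class could look like. The `LateFeeCalculator` class and its rules are invented for illustration; they are not taken from any real project. Each test is assumed to have been written before the behavior it checks.

```python
import unittest


class LateFeeCalculator:
    """Hypothetical new feature class, assumed to be written test-first."""

    def __init__(self, fee_per_day: float, grace_days: int = 0):
        self.fee_per_day = fee_per_day
        self.grace_days = grace_days

    def fee_for(self, days_overdue: int) -> float:
        # Days within the grace period are not billed.
        billable_days = max(0, days_overdue - self.grace_days)
        return billable_days * self.fee_per_day


class LateFeeCalculatorTest(unittest.TestCase):
    """One small test per class behavior; together they guard later refactoring."""

    def test_no_fee_when_not_overdue(self):
        self.assertEqual(LateFeeCalculator(fee_per_day=2.0).fee_for(0), 0.0)

    def test_fee_grows_with_days_overdue(self):
        self.assertEqual(LateFeeCalculator(fee_per_day=2.0).fee_for(3), 6.0)

    def test_grace_period_is_not_billed(self):
        calculator = LateFeeCalculator(fee_per_day=2.0, grace_days=2)
        self.assertEqual(calculator.fee_for(5), 6.0)
```

Such tests can be run with `python -m unittest`. Note that they say nothing about the legacy code the class is later wired into, which is where the remaining risk lies.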

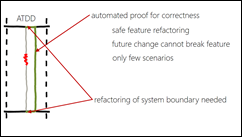

The third approach uses ATDD (Acceptance Test Driven Development). You introduce an acceptance test that exercises the feature to be developed. The system boundaries probably need to be refactored first so that you can easily execute the feature test and verify the result without having to go through the whole execution stack from the UI to the database. Once the test is in place, the new feature is implemented. When it is done, the acceptance test passes and can be used as a regression test when future changes are made.
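As a rough sketch of such an acceptance test, assume the boundary has been refactored so that the UI calls a facade and the database sits behind a replaceable store. The names `OrderingFacade` and `InMemoryOrderStore` are hypothetical and only serve the illustration.

```python
from dataclasses import dataclass, field


@dataclass
class InMemoryOrderStore:
    """Test double standing in for the real database access (assumed boundary)."""

    saved: list = field(default_factory=list)

    def save(self, order: dict) -> None:
        self.saved.append(order)


class OrderingFacade:
    """Hypothetical entry point the UI would call after the boundary refactoring."""

    def __init__(self, store):
        self.store = store

    def place_order(self, customer: str, items: dict) -> dict:
        # items maps an item name to a (unit_price, quantity) pair.
        total = sum(price * quantity for price, quantity in items.values())
        order = {"customer": customer, "total": total, "status": "accepted"}
        self.store.save(order)
        return order


def test_customer_can_place_an_order():
    # Given an empty store behind the system boundary
    store = InMemoryOrderStore()
    system = OrderingFacade(store)
    # When the feature is triggered the way the UI would trigger it
    confirmation = system.place_order("alice", {"book": (10, 2), "pen": (1, 5)})
    # Then the effect is observable from the outside
    assert confirmation["status"] == "accepted"
    assert store.saved == [{"customer": "alice", "total": 25, "status": "accepted"}]
```

The test talks only to the boundary, so it neither starts a UI nor needs a database, and it stays valid when the code behind the facade is restructured.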

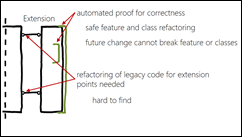

The fourth approach is called Extension. The new feature is implemented as independently from existing code as possible. The new code interacts with the existing code only through ports. Thanks to the cleanly defined interface between new and existing code, the new code can be developed completely using ATDD and TDD. The resulting tests provide a complete regression test suite for safe future refactorings and changes. The ports have to be introduced into the existing code. This is often hard to do because the right places for the ports are difficult to find and to align with the existing program flow. Changes to the program flow can be made using one of the approaches discussed earlier.
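The following sketch shows one possible shape of a port, assuming a simple notification-style interface. All names (`OrderCompletedPort`, `LoyaltyPoints`, `LegacyCheckout`) and the points rule are made up for this example; a real port depends on the program flow of the system at hand.

```python
from typing import Protocol


class OrderCompletedPort(Protocol):
    """Port: the only thing the existing code knows about the extension."""

    def order_completed(self, customer: str, amount: float) -> None: ...


class LoyaltyPoints:
    """New feature, developed in isolation with TDD and ATDD; implements the port."""

    def __init__(self) -> None:
        self.points: dict[str, int] = {}

    def order_completed(self, customer: str, amount: float) -> None:
        # Illustrative rule: one point per full 10 currency units spent.
        earned = int(amount // 10)
        self.points[customer] = self.points.get(customer, 0) + earned


class LegacyCheckout:
    """Stand-in for existing code; the only change is the call through the port."""

    def __init__(self, listeners: list[OrderCompletedPort]) -> None:
        self.listeners = listeners

    def checkout(self, customer: str, amount: float) -> None:
        # ... existing, untested legacy logic would run here ...
        for listener in self.listeners:
            listener.order_completed(customer, amount)


loyalty = LoyaltyPoints()
LegacyCheckout([loyalty]).checkout("alice", 45.0)
assert loyalty.points == {"alice": 4}
```

The new class can be tested without any legacy code at all. The loop inside `checkout` is the part that has to be placed into existing, possibly untested code.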

When working with legacy code, we use a mix of these four approaches to develop the needed new features. But when should we use which?

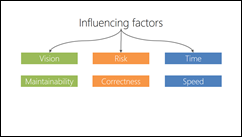

There are three influence factors: vision, risk and time.

The vision of our product tells us where to anticipate future changes in the code base.

Not every change to the code carries the same risk. Some components are high risk, either because it is very important for the system that they run correctly, or because they are very hard to understand and there is a high likelihood of introducing errors.

Finally, there normally is a time constraint. A new feature has to be implemented within a certain time-box, otherwise it is not worth building.

When we translate these three influence factors into properties of a code base, we get:

- maintainability – how well can the code be changed

- correctness – does the code work as expected

- speed – how fast can the implementation be done

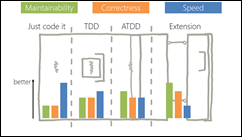

When short-term speed is needed, Just code it is fastest. However, the price for this speed is little proof of correctness and low maintainability due to the lack of tests.

Test Driven Development (TDD) gives us better maintainability and a local proof of correctness, but the change takes a bit longer.

Maintainability gets higher when using Acceptance Test Driven Development (ATDD). Correctness stays in the middle area because it is not feasible to test every possible scenario with acceptance tests. The development speed is lower than with TDD because making a system acceptance-testable normally involves some heavy refactoring of the system boundaries.

Building new features as extensions provides the best maintainability and correctness of all approaches because the new code is completely covered with unit and acceptance tests. Correctness is not as high as maintainability because we need to introduce ports into the existing code, and these changes often cannot be covered with tests. These benefits are paid for with a much slower development speed.

Although new features can be developed with fewer defects, the overall development pace is rather slow. This is mainly caused by the intersection points between new, tested code and old legacy code. The code is just overly complex.

To speed up development, we need to improve the existing code base and not just add feature by feature.

We cannot optimize the whole system at once. Such legacy systems are normally just too big and too dense, with little decoupling between components.

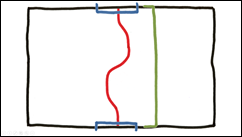

First, we identify the features that the system provides. A feature is triggered from the outside (user, other system or timer) and has an effect that can somehow be detected from the outside (in the diagram, a feature goes from top to bottom for simplicity).

In a legacy system, there are normally quite a lot of features. And they are not structured side by side, but look more like spaghetti. Because we cannot change everything at once, we need to prioritize the features according to our vision of the product, involved risk and time as seen before.

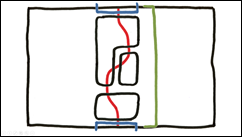

We pick the first feature that we want to clean up.

We refactor the boundary of our system in such a way that we can introduce an acceptance test for the selected feature. In most cases, a refactoring of the way the user interface talks to the business logic and the database access is needed.

After writing the acceptance test, we can check the feature automatically any time to make sure that we did not break the feature.

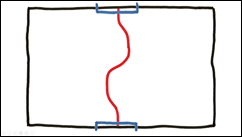

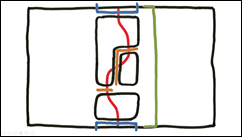

The next step is to identify the components that provide the feature. Again, we need to prioritize these components according to vision, risk and time to decide which components we want to refactor first.

Then, we refactor the interfaces of the highest prioritized component so that we can isolate it from the rest of the system.

Once a component is isolated, we can write acceptance tests that check only this component. This gives us the possibility to change individual components, or change the way these components are glued together, without having to rewrite the tests.
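A small sketch of this idea, under the assumption that the component can be hidden behind an extracted interface: the same component-level test runs against the existing implementation and against a replacement. The `Pricing` interface, both implementations and the surcharge rule are hypothetical.

```python
from abc import ABC, abstractmethod


class Pricing(ABC):
    """Interface extracted around the component (hypothetical)."""

    @abstractmethod
    def gross_cents(self, net_cents: int, region: str) -> int: ...


class LegacyPricing(Pricing):
    """Stand-in for the existing component; imagine tangled code here."""

    def gross_cents(self, net_cents: int, region: str) -> int:
        if region == "A":
            return net_cents + net_cents * 8 // 100
        return net_cents


class ReengineeredPricing(Pricing):
    """Replacement written from scratch against the same interface."""

    SURCHARGE_PERCENT = {"A": 8}  # made-up rate, for illustration only

    def gross_cents(self, net_cents: int, region: str) -> int:
        percent = self.SURCHARGE_PERCENT.get(region, 0)
        return net_cents * (100 + percent) // 100


def check_pricing_component(component: Pricing) -> None:
    """Component-level acceptance test: knows the interface, not the internals."""
    assert component.gross_cents(10_000, "A") == 10_800
    assert component.gross_cents(10_000, "B") == 10_000


# The same test is reused unchanged for both implementations.
for implementation in (LegacyPricing(), ReengineeredPricing()):
    check_pricing_component(implementation)
```

Because the test depends only on the interface, it survives both an internal refactoring and a complete replacement of the component.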

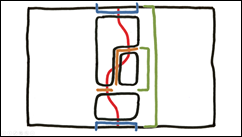

For each component we have to decide whether we want to refactor, re-engineer or keep it.

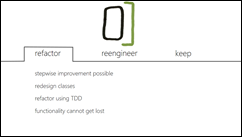

When refactoring, we improve the current state of the component step by step, covering more and more of its classes with unit tests. We take this approach when the class design of the component is good enough that a step-wise improvement is possible. Refactoring has the advantage that there is only a small likelihood of losing functionality hidden in the existing code.
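One common way to start such a step is a test that pins down the current behavior before anything is changed, often called a characterization test. This technique is our addition for illustration; the function names below are invented. The expected values are assumed to come from running the existing code, not from a specification.

```python
def legacy_format_name(first, last):
    # Legacy code as found: we do not "fix" its behavior at this stage.
    if first == "":
        return last.upper()
    return last.upper() + ", " + first[0] + "."


def format_name(first: str, last: str) -> str:
    # Refactored version; it must behave exactly like the legacy function.
    initial = f", {first[0]}." if first else ""
    return f"{last.upper()}{initial}"


def check_current_behavior(formatter) -> None:
    # Expected values were recorded from the legacy implementation.
    assert formatter("Ada", "Lovelace") == "LOVELACE, A."
    assert formatter("", "Lovelace") == "LOVELACE"


# First pin the legacy behavior, then verify the refactored code against it.
check_current_behavior(legacy_format_name)
check_current_behavior(format_name)
```

Since the checks record what the code does today, hidden functionality is preserved even when nobody remembers why it exists.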

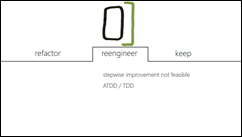

Sometimes it’s faster to replace the whole component by re-engineering it. That means, we start from scratch and use the acceptance tests to drive the implementation. Of course, we use TDD to develop the individual classes.

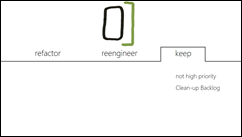

Finally, if we don’t anticipate future changes, we can keep components just the way they are. Or we decide that other components are more important at the moment and just add an item to the clean-up backlog so we don’t forget about this component.

We used this approach successfully in our projects.

We were able to avoid a big bang rewrite, with its high risk and its long period without new features. The continuous, step-by-step improvement gives us the benefit of always having a running system.

Over time, the system as a whole gets easier and easier to maintain, and new features find their way into our software faster and faster. We get faster mainly because the code base gets cleaner and easier to understand, and because we can focus on new functionality instead of worrying about breaking existing features.

If our software is to make money and be fun to work on over a long period of time, we need to treat it like a bonsai and groom it on a daily basis.

If you want to get rid of legacy code, start now.

bbv Software Services provides courses on TDD, ATDD and Legacy Code. See www.bbv.ch/academy

RT @planetgeekch: Presentation: Legacy Code and Now What? by @danielmarbach and @ursenzler http://t.co/lZdykkSUmP

Very nice article!!

nice writeup – important topic, often underestimated… (people tend to focus on building new systems, but in reality most sw-engineers maintain code all the time…)

Two related articles I find worth reading parallel to yours:

* http://onstartups.com/tabid/3339/bid/97052/Screw-You-Joel-Spolsky-We-re-Rewriting-It-From-Scratch.aspx

* (from one of the Gurus, Joel Spolsky): http://www.joelonsoftware.com/articles/fog0000000069.html

[…] Slides with explanations are available on Urs’s blog planetgeek.ch […]